Computer Limit and Computerized Systems

Computer systems, whether computers, telephones, or any unit capable of processing information, are becoming faster and faster as technology evolves.

How far can this increase physically go? This article looks at the physical limits of computers and computerized systems.

Our computers use silicon integrated circuits. These circuits are etched into the material, and what determines computing power and speed is essentially the number and size of the transistors.

The smaller, more numerous and closer together the transistors are, the faster the calculations.

As technology evolves, these transistors are etched more and more finely. The rate of miniaturization is such that it takes between 1.5 and 2 years to double the power of computing units: this is called Moore’s law, which has held up pretty well until now.

Moore’s law is not a law of physics, but rather a statement of the rate at which we improve our techniques, and a day will come when we can no longer do better: the transistors will be too small to work, or it will become impossible to etch them more finely.
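
To get a feel for what such a doubling rate means, here is a minimal sketch. The function name `growth_factor` and the 10-year horizon are assumptions chosen for illustration; only the 1.5 to 2 year doubling period comes from the text.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Factor by which computing power grows if it doubles every `doubling_period_years`."""
    return 2.0 ** (years / doubling_period_years)


# Compare the two ends of the 1.5 to 2 year range over an (assumed) 10-year span.
for period in (1.5, 2.0):
    factor = growth_factor(10.0, period)
    print(f"doubling every {period} years: about x{factor:.0f} after 10 years")
```

Over ten years, the two ends of the range give a factor of roughly 100 and of 32 respectively, which shows how sensitive the projection is to the exact doubling period.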

The purpose of this article is not to study what the future holds for us, nor to see the limits of Moore’s law, but rather to see the limits that it will never be possible to exceed no matter what, from a purely physical rather than a technical point of view.

To give an idea: current computers of the “laptop” type perform a few hundred billion operations per second (10^11 op./s), consume about a hundred watts of power and can store around one terabyte of data (10^12 bytes, or about 10^13 individual bits).

All in an object of about 1 kilogram. These values are of course rounded.

Today, data is stored in the form of electrical charges on a transistor, but one can also imagine storing information in the form of the quantum state of a molecule (0 for a folded molecule and 1 for an unfolded molecule, for example).

Changing the state of the molecule will then consume energy and take time. In addition, the information must be stored on a medium and therefore occupy a certain volume.

Landauer’s limit (or principle) and the Margolus-Levitin theorem on computer limit

The first limit to study is that of energy: how many operations can be performed, at most, with each joule of energy?

This limit is known as Landauer’s limit (or principle). It says that each bit-change operation (a 0 becoming 1, or a 1 becoming 0) comes with an entropic loss: part of the energy needed to modify the information is lost as heat.

Landauer’s limit is sometimes disputed, but it nevertheless gives an idea: 0.0175 eV per modified bit (at 20 °C or 68 °F).
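
The value quoted above can be checked with the usual expression of Landauer's limit, k × T × ln(2), where k is the Boltzmann constant and T the absolute temperature. The sketch below is only a quick numerical check; the function name `landauer_limit_ev` is chosen here for illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23   # Boltzmann constant (exact SI value), in J/K
JOULES_PER_EV = 1.602176634e-19    # one electronvolt in joules (exact SI value)


def landauer_limit_ev(temperature_celsius: float) -> float:
    """Minimum energy dissipated per modified bit, in eV: k * T * ln(2)."""
    temperature_kelvin = temperature_celsius + 273.15
    energy_joules = BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)
    return energy_joules / JOULES_PER_EV


print(f"{landauer_limit_ev(20.0):.4f} eV per bit at 20 °C")
```

At 20 °C this gives about 0.0175 eV per bit, and the limit scales linearly with the absolute temperature.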

For a contemporary computer working at this limit, the power consumption would be only about 0.3 nanowatts. Conversely, a current PC operating at this limit could carry out roughly 3 × 10^22 op./s, that is to say a few hundred billion times more than it does today, with the same electrical power (counting one operation as one bit change).
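
The arithmetic behind this comparison can be sketched as follows. It assumes that one operation corresponds to a single bit change, and it reuses the rounded laptop figures from earlier in the article (10^11 op./s and 100 W); the variable names are illustrative. The inputs are rough, so only the order of magnitude of the results matters.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23     # Boltzmann constant, in J/K
ROOM_TEMPERATURE_K = 293.15          # 20 °C expressed in kelvin

# Landauer limit: minimum energy per bit change, in joules.
ENERGY_PER_BIT_J = BOLTZMANN_J_PER_K * ROOM_TEMPERATURE_K * math.log(2)

LAPTOP_OPS_PER_SECOND = 1e11         # rounded figure from the article
LAPTOP_POWER_W = 100.0               # rounded figure from the article

# Power needed to run today's workload at the Landauer limit
# (assumption: one operation = one bit change).
power_at_limit_w = LAPTOP_OPS_PER_SECOND * ENERGY_PER_BIT_J

# Operations per second that today's power budget would allow at the limit.
ops_at_limit = LAPTOP_POWER_W / ENERGY_PER_BIT_J

print(f"power at the limit: {power_at_limit_w * 1e9:.2f} nW")
print(f"operations per second with 100 W: {ops_at_limit:.1e}")
```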

Not bad!

Another related limitation is the time it takes to change a bit in a computer. If a binary state is modified, the energy level in the information carrier (molecule, transistor…) has been changed, and therefore energy has been conveyed from one place to another.

But energy cannot move infinitely quickly in space and time, so there is a limit. This idea is supported by the Margolus-Levitin theorem.

This theorem says that an operation changing a quantum state necessarily takes at least a certain minimum time, which depends on the energy one is willing to spend on the operation.

This limit is of the order of 10^33 operations per second per joule (about 6 × 10^33, more precisely). In other words, one joule of energy is enough to carry out some 10^33 operations per second, which is again considerable, and we are still very far from that.
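
As a rough check of this order of magnitude, the Margolus-Levitin bound is commonly written as a minimum time of h / (4E) per state change, where h is the Planck constant and E the available energy, which gives at most 4E / h operations per second. The sketch below assumes that form of the bound; the function name `max_ops_per_second` is illustrative.

```python
PLANCK_J_S = 6.62607015e-34   # Planck constant h (exact SI value), in J*s


def max_ops_per_second(energy_joules: float) -> float:
    """Margolus-Levitin bound: at most 4E/h state changes per second."""
    return 4.0 * energy_joules / PLANCK_J_S


print(f"{max_ops_per_second(1.0):.1e} operations per second for 1 J")
```

For one joule this gives about 6 × 10^33 operations per second, and the bound grows in direct proportion to the energy available.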

A last interesting limit is the information density that our ultimate computer can store.

Today, our transistors measure a few tens of nanometers (the etching sizes printed on labels, such as “7 nm”, do not physically correspond to the size of the transistors and are only a commercial designation, but let’s ignore that for simplicity). In a chip of 1 cm^3, we could store 1 billion gigabits today, that is, 125,000 terabytes.
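
The storage figure can be reproduced with a simple volume count. The sketch assumes, for illustration only, that each bit occupies a cube 10 nm on a side and that the whole volume of the chip is used; neither assumption is stated in the text.

```python
CELL_SIZE_NM = 10            # assumed edge length of one bit cell, in nanometers
NM_PER_CM = 10_000_000       # 1 cm = 10^7 nm

# Number of cells along one edge of a 1 cm cube, then in the whole volume.
cells_per_edge = NM_PER_CM // CELL_SIZE_NM
total_bits = cells_per_edge ** 3

gigabits = total_bits / 1e9
terabytes = total_bits / 8 / 1e12    # 8 bits per byte, 10^12 bytes per terabyte

print(f"{total_bits:.0e} bits = {gigabits:.0e} gigabits = {terabytes:,.0f} terabytes")
```

With these assumptions the cube holds 10^18 bits, that is 1 billion gigabits or 125,000 terabytes. Doubling the cell size would divide the capacity by eight.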

However, even if we are beginning to stack bits in three dimensions (3D NAND flash in SSDs, for example), our storage is still essentially on the surface of the silicon chips, not in their volume.

We can go much smaller than 7 nm etching: one can imagine, for example, storing data in DNA, where the sequence of nucleic bases forms the code that must be read to retrieve the information.

Ultimately, the storage limit depends on the number of quantum states that an entity (molecule, atom…) can carry within a given volume.

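This quantity is the Bekenstein limit. It depends on both the mass and the size of the object, and is of the order of 10^42 bits for a kilogram of matter contained in a volume a few centimeters across.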
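
To see where this order of magnitude comes from, here is a minimal sketch using the usual form of the Bekenstein bound, I ≤ 2π R E / (ħ c ln 2) with E = m c^2. The 5 cm radius is an assumption chosen to represent a compact one-kilogram object, and the function name `bekenstein_bound_bits` is illustrative.

```python
import math

HBAR_J_S = 1.054571817e-34         # reduced Planck constant, in J*s
LIGHT_SPEED_M_S = 299_792_458.0    # speed of light, in m/s


def bekenstein_bound_bits(mass_kg: float, radius_m: float) -> float:
    """Upper bound on the information (in bits) held by a mass inside a sphere.

    Uses I <= 2*pi*R*E / (hbar*c*ln 2) with E = m*c^2, which simplifies to
    2*pi*R*m*c / (hbar*ln 2).
    """
    return (2.0 * math.pi * radius_m * mass_kg * LIGHT_SPEED_M_S
            / (HBAR_J_S * math.log(2)))


# Assumed example: 1 kg of matter inside a sphere of radius 5 cm.
bits = bekenstein_bound_bits(mass_kg=1.0, radius_m=0.05)
terabytes = bits / 8 / 1e12

print(f"about {bits:.1e} bits, or {terabytes:.1e} terabytes")
```

With these inputs the bound is a little over 10^42 bits, or roughly 10^29 terabytes. A larger radius or mass raises the bound proportionally.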

Incidentally, the human brain, which weighs on the order of a kilogram, could therefore not be fully described (down to the quantum level) without a quantity of information of this same order of magnitude.

It therefore seems again rather improbable to succeed in simulating the human brain in the near future: 10^42 bits exceeds by 6 orders of magnitude the total quantity of information emitted by humanity since its beginnings.

No mention has been made here of anything relating to the transmission of data or the technologies used (that was not the goal). There are also a lot of approximations, but the idea is to put numbers on an “ultimate computer” that we could build given the currently known limits.

Thus, we can say that we will never have a laptop with more than 10^42 bits of memory (i.e. about 10^29 TB), or able to perform more than 10^33 operations per second per joule of energy, while consuming less than current PCs.

Sources: PinterPandai

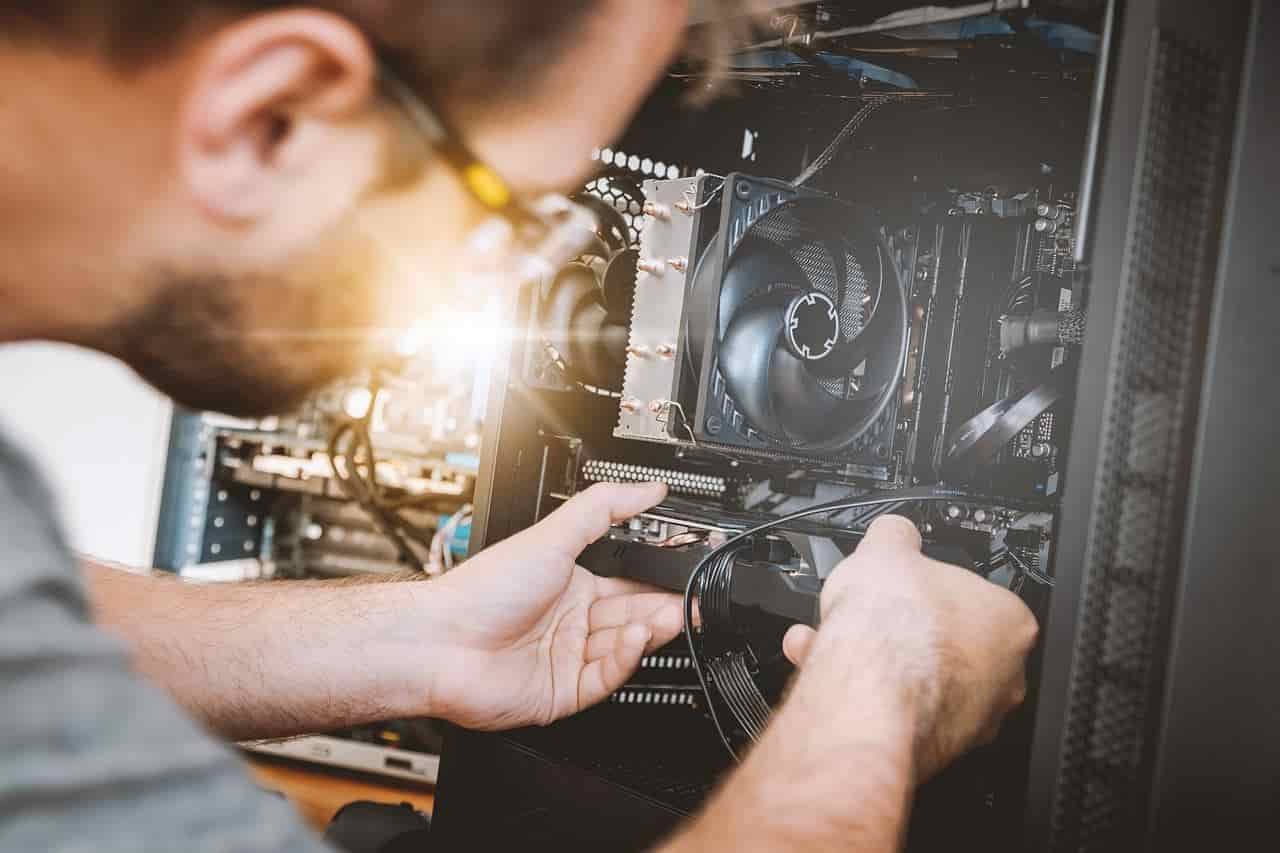

Photo credit: JESHOOTS-com via Pixabay